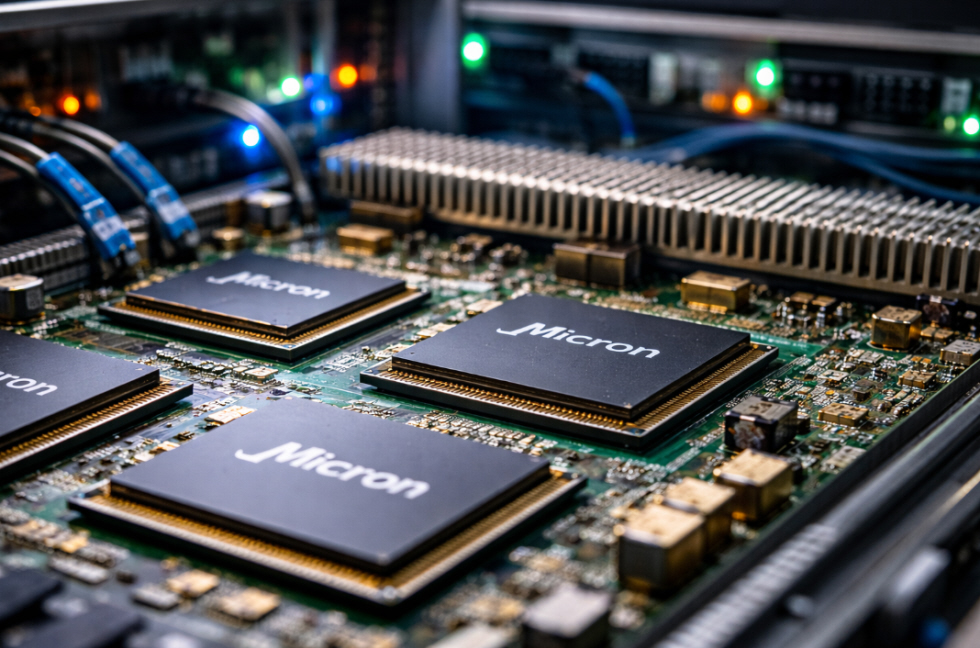

Driven by the rapid expansion of artificial intelligence, U.S. memory chipmaker Micron Technology delivered a sharply higher-than-expected forecast for revenue and profit for the current quarter, surprising financial markets. The Boise, Idaho–based company attributed the strong outlook to soaring demand for its high-bandwidth memory (HBM) chips, which are essential components in AI servers and specialized computing hardware. In a statement released after the close of U.S. markets, Micron said it expects revenue of approximately $18.7 billion, plus or minus $400 million, far above analysts' average forecast of $14.2 billion, according to LSEG data.

The company also projected adjusted earnings of $8.42 per share, significantly exceeding the $4.78 per share expected by the market. Against this backdrop, Micron confirmed a strategic shift toward high-margin, high-performance storage solutions tailored specifically for artificial intelligence applications. As part of this move, the company plans to gradually exit the consumer memory segment, which includes chips used in personal computers and smartphones.

Analysts have welcomed the decision, noting that the consumer memory market offers weaker growth and lower margins than the rapidly expanding AI-driven segment, where demand remains strong and profitability continues to rise. Micron’s announcement comes at a time of growing supply constraints across the global memory chip market, affecting both AI servers and traditional consumer electronics. The accelerated buildout of AI data centers is absorbing a significant share of global semiconductor production capacity.

As a result, electronics retailers in Japan have begun limiting hard drive purchases per customer, while smartphone manufacturers are warning of potential price increases. Some economists caution that persistent supply shortages could fuel inflationary pressures and delay planned investments in AI infrastructure worldwide. Alongside Micron, the global memory chip market is dominated by South Korea's Samsung Electronics and SK Hynix, both of which are also seeking to expand production capacity.

However, uncertainty remains over how much of that additional capacity will be allocated to high-bandwidth memory chips versus traditional memory products. HBM chips, designed to store and transfer massive volumes of data at extremely high speeds, have become a critical component for next-generation AI data centers. According to estimates from Micron rival SK Hynix, the global HBM market is expected to grow at an annual rate of around 30 percent through 2030, underscoring the central role artificial intelligence is set to play in the technology industry over the coming decade.